The era of AI video is shifting from novelty to utility. This change is driven by powerful Image-to-Video (I2V) models like Stable Video Diffusion, Runway Gen-2, and Kling AI. These tools allow creators to turn a single, high-quality still image into a dynamic video clip.

However, the primary hurdle remains the “Consistency Problem.” Faces flicker. Objects randomly change color. Lighting shifts unnaturally between frames. The solution isn’t just better models. It requires a structured, professional workflow that puts image creation first.

Never Start with Text: Why the Text-to-Video Method Fails Consistency

The initial hype around generative video focused on Text-to-Video (T2V), where you simply type a prompt (e.g., “A knight standing in a field of fireflies”) and the AI generates the clip. While revolutionary, this method is inherently prone to inconsistency:

- Lack of Visual Anchor: The AI has no fixed starting point. It interprets the text prompt and creates an initial frame from scratch. The elements, composition, and lighting are sampled from what the model has learned, so they vary from one generation to the next.

- Character/Asset Drift: Without a fixed reference, the AI struggles to maintain identity during motion. Faces morph and outfit details fluctuate, breaking consistency.

- Client Approval Risk: The inability to pre-approve specific branding or lighting leads to expensive, endless re-generation cycles to meet client standards.

The modern, professional workflow for AI filmmaking flips this on its head, prioritizing the Image-to-Video (I2V) approach.

The “First Frame” Technique: Establishing Your Visual Contract

The most critical step in solving the consistency problem is to treat your source image as the visual contract for the entire video. This technique ensures that the AI’s starting point is fixed, high-quality, and pre-approved.

Step 1: Generate the Perfect Still Image

Instead of generating video directly from text, use a dedicated, powerful AI Image Generator (like Midjourney, Leonardo AI, or Stable Diffusion) to create the first frame.

- Lock in Details: Craft a master image that defines every element—character, attire, lighting, and aesthetic—down to the pixel.

- Approval and Alignment: Once the still image is perfect, it can be shared with stakeholders for final approval on branding, color palette, and composition. This locks in the core visual identity before animation begins.

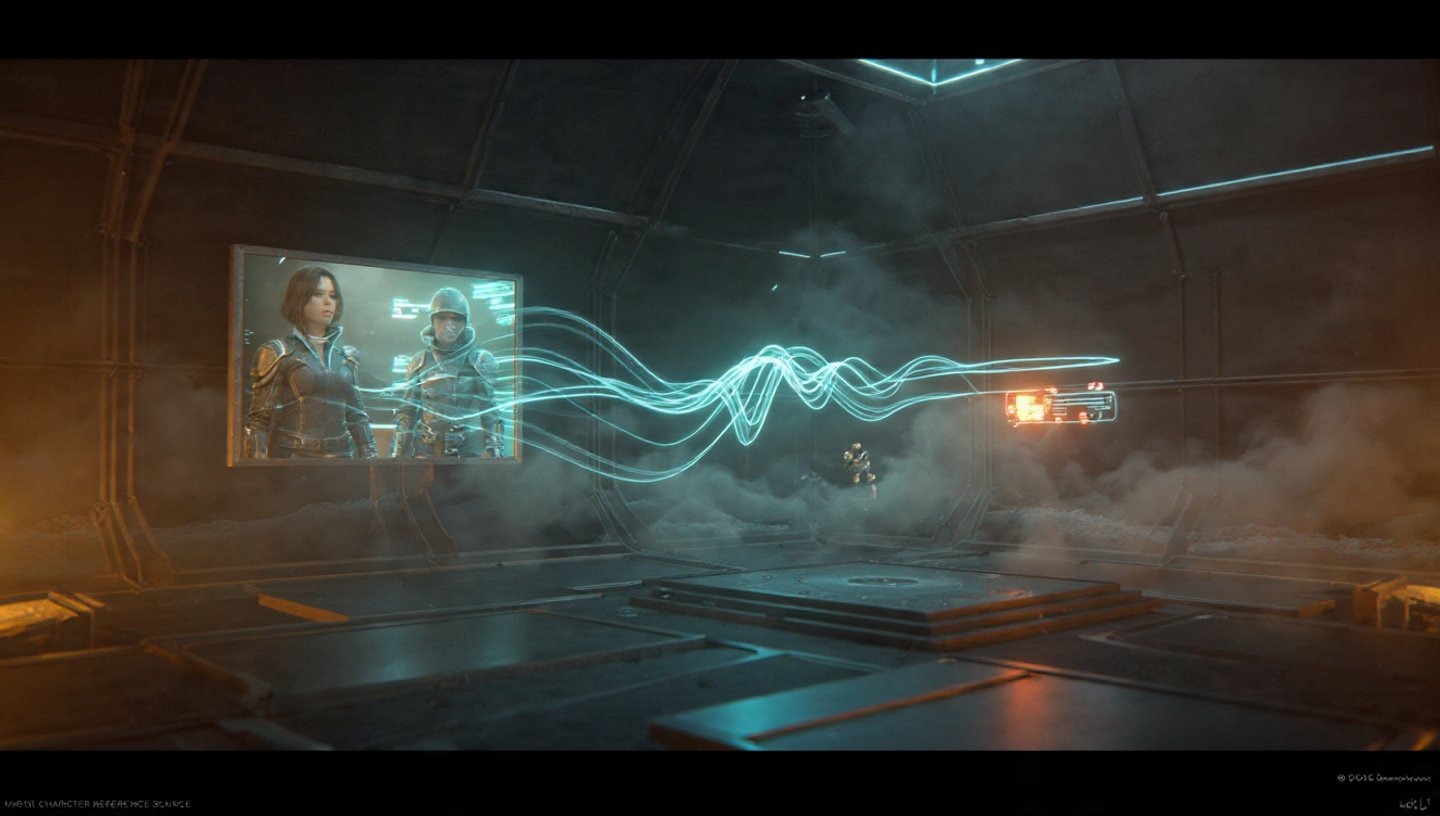

Using an initial, consistent image in this way is a powerful form of Reference-to-Video: it helps the AI carry detailed patterns and character traits across all frames.

Step 2: Animate with Constraints

The approved still image is then uploaded to the I2V platform (e.g., Runway, Kling AI) as the Start Frame (or conditioning image). The AI’s job shifts from “imagine this scene” to “animate this specific image according to my instructions.”

- Motion Prompt: You now provide instructions focused purely on movement (e.g., “Subtle camera dolly in, the wind gently moves the character’s hair, a soft blue light flickers on the left”). The visual details are preserved, and only the dynamics change.

- Consistency Leverage: Because the AI has a high-fidelity starting image to reference for every subsequent frame, it maintains texture, color, and character identity far more reliably than T2V.
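
The split between "look" and "motion" described above can be sketched as a small job description. This is a minimal illustration only: the `I2VJob` class, its field names, the file path, and the `duration_seconds` and `seed` fields are all hypothetical and do not correspond to the API of Runway, Kling AI, or any other platform.

```python
import json
from dataclasses import dataclass, asdict


# Hypothetical job description: field names are illustrative,
# not taken from any real I2V platform's API.
@dataclass(frozen=True)
class I2VJob:
    start_frame: str               # path to the approved still image (the "visual contract")
    motion_prompt: str             # movement-only instructions; no appearance details
    duration_seconds: float = 4.0  # assumed parameter, for illustration
    seed: int = 0                  # assumed parameter; a fixed seed keeps re-runs comparable

    def to_json(self) -> str:
        """Serialize the job so it can be logged or reviewed alongside the still."""
        return json.dumps(asdict(self), indent=2)


job = I2VJob(
    start_frame="approved/knight_master.png",  # hypothetical path
    motion_prompt=(
        "Subtle camera dolly in, the wind gently moves the character's hair, "
        "a soft blue light flickers on the left"
    ),
)
print(job.to_json())
```

Keeping the appearance in the image and only the dynamics in the prompt makes it easier to see, when a result drifts, whether the still or the motion instructions need to change.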

End Frame Conditioning: The Seamless Transition

For advanced storytelling, the goal is often a seamless transition between two different states, moods, or products (e.g., a Day-to-Night transition, or a switch between two different product configurations). This is solved with End Frame Conditioning.

- How it Works: In addition to uploading your Start Image, you also upload a separate, specifically crafted End Image.

- The AI’s Task: The AI generates intermediate frames to smoothly morph the start image into the end image, guided by the text prompt.

| Input | Example | Purpose |
| --- | --- | --- |
| Start Frame | Image of a car in bright sunlight. | Establishes the initial look and composition. |
| End Frame | Image of the same car in dark, neon-lit rain. | Sets the precise target visual for the transition. |
| Motion Prompt | “A sudden downpour begins, the sun sets quickly, and the vibrant red paint reflects the neon streetlights as the car’s headlights flash on.” | Instructs the AI on the narrative and timing of the change. |

This technique is essential for chaining shots together for longer narratives. Creators can build extended, multi-sequence videos with consistent continuity, provided the end frame of each clip matches the start frame of the next.
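
The chaining rule can be checked mechanically before any clip is rendered. The sketch below assumes each clip is described by the raw bytes of its start and end conditioning images; the `Clip` class and `find_continuity_breaks` function are hypothetical helpers, not part of any I2V tool. It compares file hashes, so it only confirms that the same image file was reused at each boundary.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Clip:
    name: str
    start_frame: bytes  # raw bytes of the start conditioning image
    end_frame: bytes    # raw bytes of the end conditioning image


def _digest(data: bytes) -> str:
    """SHA-256 hex digest used as a byte-exact fingerprint of an image file."""
    return hashlib.sha256(data).hexdigest()


def find_continuity_breaks(clips: list[Clip]) -> list[tuple[str, str]]:
    """Return (previous, next) clip-name pairs whose boundary frames differ."""
    breaks = []
    for prev, nxt in zip(clips, clips[1:]):
        if _digest(prev.end_frame) != _digest(nxt.start_frame):
            breaks.append((prev.name, nxt.name))
    return breaks


# Stand-in byte strings; in practice these would come from reading image files.
sunny = b"car-in-sunlight"
rainy = b"car-in-neon-rain"
garage = b"car-in-garage"

sequence = [
    Clip("day_to_night", sunny, rainy),
    Clip("night_drive", rainy, garage),
    Clip("finale", sunny, garage),  # starts from the wrong frame
]
print(find_continuity_breaks(sequence))  # prints [('night_drive', 'finale')]
```

A byte-exact comparison is deliberately strict: an image that was re-exported or re-compressed is flagged even if it looks identical, which is usually the safer default when continuity matters.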

In summary, the most effective AI Image-to-Video workflow is fundamentally an exercise in control and constraint. By using stills to define the look and Start/End frames to guide the arc, creators can address consistency issues and deliver professional results.